Vibe Coding Success Rate for Non-Developers: What the Data Actually Shows (2026)

Key Takeaways

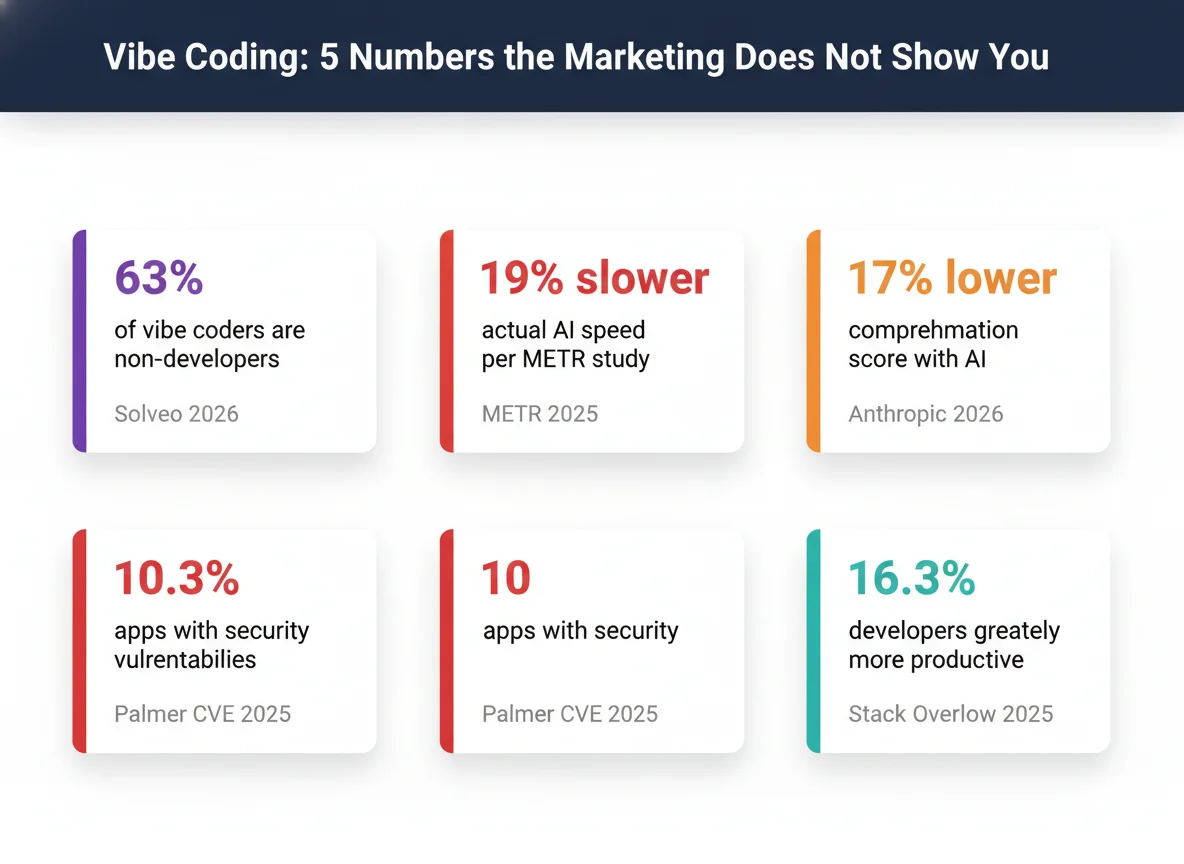

- → 63% of active vibe coding community members are non-developers: product managers, founders, marketers, operations professionals (Solveo analysis of r/vibecoding, Feb 2026)

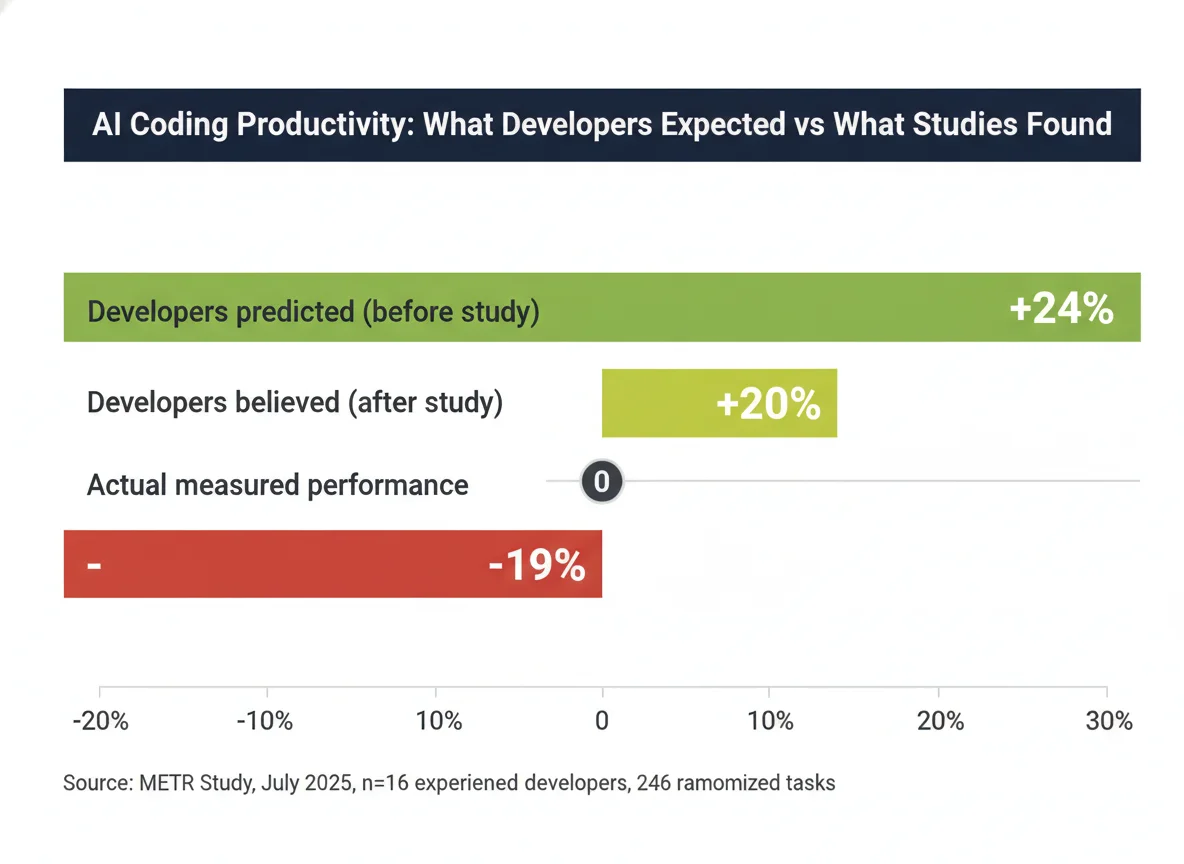

- → Developers using AI were 19% slower on real tasks while believing they were 20% faster, a 39-point perception-reality gap (METR RCT, July 2025)

- → Only 16.3% of developers say AI tools made them “greatly more productive” (Stack Overflow Developer Survey 2025)

- → 45% of developers report frustration with AI code that is “almost right, but not quite” (Stack Overflow 2025)

- → 10.3% of Lovable-created apps scanned had exploitable security vulnerabilities, all from misconfigured defaults (Matt Palmer, CVE-2025-48757, May 2025)

- → AI-assisted developers scored 17% lower on code comprehension, equivalent to nearly two letter grades (50% vs 67%) (Anthropic arXiv:2601.20245, Jan 2026)

- → Real non-developer ship timelines range from 3 weeks to 3+ years, not the “2 days” narratives that dominate social media (self-reported, r/vibecoding 2025–2026)

Every week, social media fills with posts about apps built “in two days” and “without writing a single line of code.” What those posts don’t show are the builders who quietly abandon their projects around month three, or the 600,000 user records exposed by a security default nobody warned them about.

This article assembles the best available data, 14 verified facts from 15 cited sources, to answer a question nobody has directly measured: do non-developers actually succeed at vibe coding?

The honest answer: we don’t have a direct completion rate.

But the proxy evidence points clearly in a specific direction, and the gap between what marketing implies and what the data shows is wide enough to matter.

1 Who Is Actually Vibe Coding? Demographics & Adoption

About 63% of active vibe coding community members are non-developers, but “active community member” and “successful product shipper” are very different populations.

The tools are genuinely accessible to everyone; what the data shows about outcomes is a different story.

| Metric | Value | Source |
|---|---|---|
| r/vibecoding community members | 153,000+ | Reddit, April 2026 |
| Non-developers among active vibe coders | 63% | Solveo / Second Talent, Feb 2026 |
| Developers using or planning to use AI tools | 84% | Stack Overflow 2025 |
| Lovable ARR (8 months after launch → Feb 2026) | $100M → $400M | TechCrunch, 2025–2026 |
| YC W25 startups with 95%+ AI-generated codebases | 25% | TechCrunch / YC, March 2025 |

63% of active vibe coding community members are non-developers: product managers, founders, marketers, and operations professionals.

This figure comes from a Solveo analysis of 1,000 Reddit comments from r/vibecoding (153K+ members).

It represents community demographics, not a representative survey of all AI coding tool users globally.

The $400M ARR figure for Lovable alone, growing from zero in under 24 months, signals that non-developers are spending real money on these tools.

But revenue from subscriptions tells us about adoption, not completion rates.

The YC W25 stat is frequently cited as evidence that “anyone can build with AI now.” It contains a critical caveat buried in the original TechCrunch report: YC managing partner Jared Friedman noted these are “highly technical” engineers who would have coded from scratch a year prior.

Non-developer success is not what that number measures.

If you’re trying to understand what vibe coding actually involves before assessing your odds, or if terms like “scaffolding,” “context window,” and “token limit” are unfamiliar, the vibe coding glossary covers every term you’ll encounter.

2 The Productivity Myth: What AI Does to Speed

The most rigorous available evidence shows AI tools made experienced developers 19% slower on real tasks, while those same developers predicted a 24% speedup and still believed they were 20% faster afterwards.

An earlier lab study found the opposite.

The difference is methodology, not error: controlled lab tasks produce different results than real-world complex projects.

In the METR study, experienced open-source developers completed real GitHub issues 19% more slowly with AI tools than without.

Three out of four participants were slower.

Before the study, these same developers predicted a 24% speedup.

After experiencing the slowdown, they still reported believing AI had helped them work 20% faster.
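The 39-point gap in the Key Takeaways is plain percentage-point arithmetic. A minimal sketch, assuming both the developers’ belief and the measured result are placed on one signed scale (positive means faster, negative means slower, as the article frames them), using only the METR figures quoted above:

```python
# METR (July 2025) figures as quoted in this article, expressed as signed
# speed changes in percent: positive = faster, negative = slower.
predicted_before = 24.0   # speedup developers forecast before the study
believed_after = 20.0     # speedup they still reported afterwards
measured = -19.0          # measured result: 19% slower


def gap_in_points(belief: float, result: float) -> float:
    """Percentage-point distance between what was believed and what was measured."""
    return belief - result


perception_gap = gap_in_points(believed_after, measured)
forecast_gap = gap_in_points(predicted_before, measured)

print(f"Perception gap: {perception_gap:.0f} points")  # Perception gap: 39 points
print(f"Forecast gap: {forecast_gap:.0f} points")      # Forecast gap: 43 points
```

The same arithmetic applied to the pre-study forecast gives an even wider 43-point miss.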

| Study | Participants | Task Type | Key Finding | Setting |
|---|---|---|---|---|
| METR (2025) | 16 experienced open-source devs | Real open-source GitHub issues | −19% speed (slower) | Field, real projects |
| Anthropic (2026) | 52 junior devs | Learn a new Python library | −17 points comprehension (50% vs 67%) | Controlled, pre/post quiz |
| GitHub / MIT (2023) | 95 Upwork devs | Write an HTTP server in JavaScript | +55.8% speed (faster) | Lab, single bounded task |

Sources: METR 2025; Anthropic arXiv 2026; GitHub/MIT arXiv 2023
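The Anthropic row’s 17 is a difference between two quiz averages, so it is measured in percentage points; as a share of the higher score, the drop is larger. A quick check, assuming (as the 50% vs 67% figures in the Key Takeaways imply) that 67% is the average for participants who worked without AI:

```python
# Anthropic (Jan 2026) quiz averages as quoted in this article.
# Assumption: 67% is the group that coded without AI assistance.
no_ai_score = 67.0
ai_assisted_score = 50.0

# Absolute difference: percentage points on the quiz.
absolute_drop = no_ai_score - ai_assisted_score

# Relative difference: the drop as a share of the no-AI group's score.
relative_drop = absolute_drop / no_ai_score * 100

print(f"{absolute_drop:.0f} percentage points")            # 17 percentage points
print(f"{relative_drop:.1f}% lower than the no-AI group")  # 25.4% lower than the no-AI group
```

Both numbers describe the same result; they answer different questions.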

The contradiction between the 2023 GitHub study (+55.8%) and the 2025 METR field study (−19%) is explained by methodology, not disagreement.

The GitHub study used a single bounded task (implementing an HTTP server in JavaScript) performed by developers hired specifically for the test.

The METR study used real open-source issues across a full project context.

GitHub Copilot’s enterprise productivity research uses similarly bounded tasks, which is why vendor-cited productivity numbers often look dramatically different from developer surveys.

The more a task resembles what a non-developer actually builds (complex, multi-session, real-world scope), the less the lab results apply.

There’s also the hidden cost of AI hallucinations in generated code: plausible-looking code that silently does the wrong thing is harder to catch than code that simply fails.

3 Why Projects Stall: The 70% Problem and the Month 3 Wall

Two patterns dominate the community record: the “70% problem” (reaching a working prototype quickly, then hitting an accelerating bug cycle before completion) and the “Month 3 Wall” (structural codebase failure after AI builds multiple features in isolation).

Neither is a vendor-acknowledged failure mode.

Both have strong independent evidence.

The Month 3 Wall is a named community pattern in r/vibecoding (805 upvotes, 283 comments, April 2026).

The structural cause: AI builds each new feature in an isolated session without full system context, creating API routes that bypass existing middleware, duplicated logic across modules, and cascading breakage when new features interact.

“I’m on my third full rebuild” is a recurring comment in that thread.

Most builders don’t know this failure mode exists until they’re in it.
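The “API routes that bypass existing middleware” failure is easier to recognise in code. The sketch below is a deliberately tiny, framework-free Python model, and every name in it (`require_auth`, `route`, `ROUTES`, the invoice handlers) is hypothetical. The first feature follows the project’s convention of registering routes through an auth wrapper; a feature added in a later session, without that context, writes straight into the route table:

```python
from typing import Callable, Dict, Optional

Request = Dict[str, Optional[str]]
Handler = Callable[[Request], str]

ROUTES: Dict[str, Handler] = {}


def require_auth(handler: Handler) -> Handler:
    """Hypothetical middleware: reject any request without a logged-in user."""
    def wrapped(request: Request) -> str:
        if not request.get("user"):
            return "401 Unauthorized"
        return handler(request)
    return wrapped


def route(path: str) -> Callable[[Handler], Handler]:
    """Project convention: routes registered here are wrapped in require_auth."""
    def register(handler: Handler) -> Handler:
        ROUTES[path] = require_auth(handler)
        return handler
    return register


# Session 1: written with the convention in view, so it is protected.
@route("/invoices")
def list_invoices(request: Request) -> str:
    return f"200 invoices for {request['user']}"


# Session 2: a later feature generated without that context. It writes
# straight into ROUTES, so require_auth never runs for this path.
def export_invoices(request: Request) -> str:
    return "200 full invoice export"


ROUTES["/invoices/export"] = export_invoices


def handle(path: str, request: Request) -> str:
    """Dispatch a request to whatever handler is registered for the path."""
    return ROUTES[path](request)


anonymous: Request = {"user": None}
print(handle("/invoices", anonymous))         # 401 Unauthorized
print(handle("/invoices/export", anonymous))  # 200 full invoice export
```

Both features work when tested while logged in, which is why the gap tends to go unnoticed until someone requests the second route anonymously.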

Addy Osmani, a Google Chrome DevTools engineer, described the “70% problem” in December 2024: non-engineers reach roughly 70% of a working solution quickly, but the final 30% becomes “an exercise in diminishing returns” as each fix surfaces new bugs in an accelerating cycle.

This qualitative framework predates but closely predicts the Month 3 Wall pattern documented in community threads a year and a half later.

GitClear’s analysis of AI-assisted development found 41% higher code churn and 4× more code duplication: the exact structural problems that cause cascading failure in month three.
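Exact duplication of this kind can be checked mechanically. A minimal sketch using Python’s standard `ast` module, assuming a Python codebase: it groups functions whose bodies are structurally identical, regardless of comments, formatting or function names. It is an illustration only, not GitClear’s methodology, and it misses near-duplicates where variables were renamed:

```python
import ast
import hashlib
from collections import defaultdict
from typing import Dict, List


def find_duplicate_functions(source: str) -> List[List[str]]:
    """Group functions whose bodies are structurally identical.

    The parser discards comments and ast.dump() omits line numbers by default,
    so formatting and function names do not matter. Renamed variables or
    differing docstrings DO matter, so near-duplicates are missed.
    """
    groups: Dict[str, List[str]] = defaultdict(list)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            body = "".join(ast.dump(stmt) for stmt in node.body)
            digest = hashlib.sha256(body.encode()).hexdigest()
            groups[digest].append(node.name)
    return [names for names in groups.values() if len(names) > 1]


SAMPLE = '''
def cart_total(items):
    # added in the checkout session
    return sum(i["price"] * i["qty"] for i in items)

def invoice_total(items):
    return sum(i["price"] * i["qty"] for i in items)

def item_count(items):
    return len(items)
'''

print(find_duplicate_functions(SAMPLE))  # [['cart_total', 'invoice_total']]
```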

Context window limitations are the underlying mechanism: when AI can’t hold the full project in a single session, it generates each feature semi-blindly against the codebase it built last time.

The result is what the community now calls “lazy AI”: output that technically compiles but quietly breaks existing functionality.
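A back-of-envelope check shows how quickly a growing project outgrows a single session. This sketch assumes roughly 4 characters per token (a common rule of thumb, not any specific model’s tokenizer); the 128,000-token window, the 16,000-token reserve and the project size are hypothetical numbers chosen for illustration:

```python
from typing import Dict, Tuple

CHARS_PER_TOKEN = 4  # assumption: rough average, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Crude token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def context_check(files: Dict[str, str], context_tokens: int,
                  reserved_tokens: int) -> Tuple[int, bool]:
    """Estimate whether every file fits in one session's context window.

    reserved_tokens leaves room for the prompt, chat history and the model's
    answer; both limits are hypothetical inputs, not real tool settings.
    """
    total = sum(estimate_tokens(body) for body in files.values())
    return total, total <= context_tokens - reserved_tokens


# Hypothetical month-3 project: 60 files of ~8,000 characters each.
project = {f"src/feature_{i}.py": "x" * 8_000 for i in range(60)}
total, fits = context_check(project, context_tokens=128_000, reserved_tokens=16_000)
print(total, fits)  # 120000 False
```

Once the estimate crosses the budget, each new session necessarily sees only part of the codebase, which is the “semi-blind” generation described above.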

4 Real Ship Timelines: What Non-Developers Actually Report

Self-reported timelines from verified Reddit posts range from 3 weeks (a focused weather app with paying subscribers) to 1 year (a non-developer starting from zero) to 3+ years before a first public product.

All three are honest data points.

Only one gets shared on social media.

| Builder Profile | Product | Time to Ship | Status |
|---|---|---|---|
| Fred, no coding background, started at age 48 | AI Jingle Maker (SaaS) | ~1 year | Hundreds of users, profitable |
| Non-developer, focused scope | Weather app | ~3 weeks | Paying subscribers |
| Self-taught over 3 years | 40 private tools → first public app | 3+ years | First public launch March 2026 |
| YC W25 founders (avg) | Startup codebases (95%+ AI) | Not reported | “Highly technical”, per YC managing partner |

Sources: r/vibecoding 2025–2026 (user-reported); TechCrunch / YC March 2025 (YC row)

Roughly one year: that’s how long it took Fred to go from his first Python script at age 48, with no prior coding background, to a functioning SaaS with hundreds of paying users.

His description: AI is “a cheat code if you can think clearly and communicate your ideas.” But he also noted that he “understands what the tools return and can troubleshoot.” That final qualifier is the detail that doesn’t make it into the headline.

Three weeks and three years are both genuine outcomes: they reflect different project complexity, different builder backgrounds, and different definitions of “shipped.” The 3-week weather app was narrow in scope, with a single focused feature.

The 3-year builder spent that time building 40 internal tools, essentially an informal technical education, before attempting a public launch.

The survivorship bias problem is structural: “shipped in 2 days” posts generate enormous engagement and dominate the visible record.

“I’ve been at this for 8 months and haven’t launched” posts don’t go viral.

Deploying and hosting a vibe-coded app is frequently where timelines stall: the build works locally but the path from working demo to live URL with real users requires steps that AI tools don’t fully automate.

5 The Security Risk Nobody Mentions

10.3% of Lovable-created apps in a May 2025 scan had exploitable security vulnerabilities.

A separate analysis of 15 vibe-coded apps found 69 vulnerabilities including 6 critical.

The most common cause is a default configuration issue that non-developers have no way of knowing about without being explicitly warned.

10.3%: that’s the proportion of Lovable-created apps found to have exploitable security vulnerabilities in a May 2025 scan of 1,645 publicly accessible apps.

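The root cause is database access rules left open. In the Lovable apps Palmer scanned, Supabase Row Level Security policies were missing or insufficient; on Firebase, the equivalent trap is “test mode”, whose starter rules allow public read/write.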

Most non-developer builders never change this setting because nothing in the build experience alerts them that it exists.
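A first-pass check for the Firebase version of this trap can be scripted. A minimal sketch, assuming Cloud Firestore rules saved as plain text: it flags `allow` statements whose only condition is `true` or a test-mode style expiry date. The sample mirrors the general shape of Firebase’s test-mode starter rules, but its date is made up. This is a naive text match, not a rules parser; the authoritative check is Firebase’s own tooling, such as the Rules Playground in the console or the Local Emulator Suite:

```python
import re
from typing import List

# Naive pattern for an allow-statement whose only condition is "true" or a
# test-mode style expiry such as:
#   allow read, write: if request.time < timestamp.date(2025, 6, 14);
OPEN_RULE = re.compile(
    r"allow\s+([\w\s,]+?)\s*:\s*if\s+"
    r"(true|request\.time\s*<\s*timestamp\.date\([^)]*\))\s*;"
)


def find_open_rules(rules_text: str) -> List[str]:
    """Flag Firestore rule lines that grant access with no auth or data check."""
    findings = []
    for line_no, line in enumerate(rules_text.splitlines(), start=1):
        code = line.split("//", 1)[0]  # drop // comments before matching
        match = OPEN_RULE.search(code)
        if match:
            findings.append(f"line {line_no}: anyone can {match.group(1).strip()}")
    return findings


TEST_MODE_RULES = """rules_version = '2';
service cloud.firestore {
  match /databases/{database}/documents {
    match /{document=**} {
      allow read, write: if request.time < timestamp.date(2025, 6, 14);
    }
  }
}"""

LOCKED_RULES = """match /users/{userId} {
  allow read, write: if request.auth != null && request.auth.uid == userId;
}"""

print(find_open_rules(TEST_MODE_RULES))  # ['line 5: anyone can read, write']
print(find_open_rules(LOCKED_RULES))     # []
```

A clean result from a check like this does not prove the rules are safe; it only rules out the most obvious fully public pattern.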

| Security Finding | Data Point | Source |
|---|---|---|
| Lovable apps with exploitable vulnerabilities (scan) | 170 / 1,645 (10.3%) | Matt Palmer, CVE-2025-48757, May 2025 |
| Total vulnerabilities in 15 vibe-coded apps (Tenzai) | 69 (6 critical) | Tenzai / Hashnode 2026 |
| AI-generated code with OWASP Top-10 issues | 45% | Tenzai / Hashnode 2026 |
| Quittr user records exposed in breach | 600,000 | 404 Media / Cybernews, 2026 |
| Quittr revenue before breach discovered | $1M+ | r/vibecoding, 2026 |

The Quittr story illustrates what the numbers mean in practice.

The app, a porn addiction recovery tool, reached $1M+ in revenue and 350,000 downloads across 120 countries before its Firebase database was discovered to have been set to public read/write from day one. 600,000 user records were ultimately exposed, including self-reported personal data from minors.

Quittr was not built with Lovable (it used SwiftUI, Firebase, and Superwall), but the failure pattern is identical to the Palmer CVE findings: a non-developer built a functional, revenue-generating product without understanding the security implications of a default configuration.

The AI guardrails built into vibe coding tools have improved, but they primarily catch prompt injection and unsafe code generation, not misconfigured API and database permissions that were set before the AI was involved.

6 What Separates Those Who Ship from Those Who Don’t

The data doesn’t give a completion rate, but it consistently points to one predictor: whether the builder understands what the AI is generating, not just whether they can prompt.

Comprehension behaviour matters more than technical background.

Comprehension scores in Anthropic’s 2026 study split sharply by behaviour.

Developers who asked follow-up questions and requested explanations of the code scored 65%+ on a post-session comprehension quiz.

Those who simply accepted AI-generated code without asking questions scored below 40%.

The variable isn’t technical background; it’s curiosity about what the code actually does.

Shippers tend to share a specific characteristic: they understand what the tools return.

Fred explicitly noted this: he can read what the AI generates and troubleshoot when it fails.

The builder who spent 3 years on 40 internal tools effectively ran an informal technical curriculum before going public.

The YC W25 founders using 95%+ AI-generated code are “highly technical” by Jared Friedman’s own description.

Tools like Bolt and Cursor are evolving rapidly and genuinely lowering the skill floor, but the Anthropic data suggests that builders who treat these tools as pure delegation, rather than AI-assisted collaboration, consistently hit the wall harder.

Understanding the large language model you’re working with (its tendencies, its failure modes, what it optimises for) is the variable that appears to matter most.

7 Readiness Assessment: How Ready Are You to Vibe Code?

The research points to five factors that distinguish builders who ship from those who stall.


Methodology

This article assembles verified facts from 33 sources consulted, 15 of which are directly cited.

No statistics were fabricated or estimated.

Every data point maps to a verifiable primary or secondary source.

- Research date: April 14, 2026

- Sources consulted: 33 (academic preprints, journalism, industry surveys, Reddit primary threads)

- Sources cited: 15

- Facts cross-verified: 14 | Single-source: 7 | User-reported (labelled): 3

- Data freshness: 9 sources from 2026; 8 from 2025; 2 from 2024 (with justification); 1 from 2023 (included as context for vendor productivity claims)

- What doesn’t exist: No study has measured the percentage of non-developers who start vibe coding and successfully ship a product with real users. Product Hunt does not publish segmented data on AI-built product launches. The State of Indie Hackers 2025 report was not publicly accessible at the time of research.

- Update schedule: Reviewed quarterly or when a superseding study is published

Frequently Asked Questions

Can non-developers actually build and ship real products with AI coding tools?

Yes, but the timeline and what “shipping” means vary widely.

Individual stories range from 3 weeks (a weather app with paying subscribers) to approximately 1 year (a non-developer who started at age 48 and shipped a profitable SaaS) to 3+ years of internal projects before a first public launch.

The “2 days to launch” posts that dominate social media reflect survivorship bias: they are exceptional outcomes, not typical ones.

No aggregate success rate data exists, but proxy evidence (the 70% problem, the Month 3 Wall, METR’s productivity data) suggests the path is harder than vendor marketing implies.

Do AI coding tools actually make you more productive?

It depends heavily on your existing skill level and task type.

In the most rigorous field study available (METR, July 2025, n=16), experienced developers took 19% longer to complete tasks with AI, yet believed they were 20% faster.

An earlier lab experiment (GitHub/MIT, 2023) found 55.8% speed gains on a specific bounded task.

The key variable appears to be how much you understand the code the AI generates: Anthropic’s 2026 study found developers who asked follow-up questions scored 65%+ on comprehension, while those who simply accepted generated code scored below 40%.

What percentage of vibe coding users are non-developers?

A February 2026 analysis by Solveo of 1,000 comments from r/vibecoding (153K+ members) found 63% of active community members are non-developers: product managers, founders, marketers, and operations professionals.

This figure represents community demographics, not a representative sample of all AI coding tool users globally.

It should be read as a signal about who is engaging with vibe coding discourse, not who is successfully shipping products.

Is vibe-coded software secure?

Security is a significant documented risk.

A May 2025 security researcher scan found 10.3% of Lovable-created apps (170 out of 1,645) had exploitable vulnerabilities.

A Tenzai analysis of 15 vibe-coded apps found 69 vulnerabilities including 6 critical, with 45% of AI-generated code containing OWASP Top-10 issues.

The most common problem is misconfigured database access rules: Firebase’s “test mode”, for example, starts with fully public rules, and many non-developer builders never change them.

The Quittr app reached $1M+ revenue while its database was publicly readable, ultimately exposing 600,000 user records.

Why do most vibe-coded projects fall apart after a few months?

The “Month 3 Wall” is a named community phenomenon in r/vibecoding (805 upvotes, 283 comments, April 2026).

The structural cause: AI builds each feature in an isolated session without full system context, creating API routes that bypass existing middleware, duplicated logic across modules, and cascading breakage when new features are added.

Projects that survive the Wall typically involve builders who maintain architecture documentation, use context files that describe the full project structure, and read, rather than just run, the code the AI generates.
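Part of such a context file can be generated rather than written by hand. A minimal sketch in Python, assuming the AI tool can be pointed at a Markdown file in the project; the file name `PROJECT_CONTEXT.md`, the skipped-folder list and the convention text are hypothetical, and which file a given tool actually reads varies by tool:

```python
import os
from pathlib import Path
from typing import Iterable, List

# Hypothetical list of folders that only add noise to a context file.
SKIP_DIRS = {".git", "node_modules", "__pycache__", ".venv", "dist", "build"}


def project_tree(root: str, max_depth: int = 3) -> List[str]:
    """Return the project's folders and files as an indented Markdown outline."""
    root_path = Path(root)
    lines: List[str] = []
    for dirpath, dirnames, filenames in os.walk(root_path):
        # Prune and sort in place so os.walk skips noise and visits folders in order.
        dirnames[:] = sorted(d for d in dirnames if d not in SKIP_DIRS)
        rel = Path(dirpath).relative_to(root_path)
        depth = len(rel.parts)
        if depth > max_depth:
            dirnames[:] = []  # do not descend any further
            continue
        if depth:
            lines.append("  " * (depth - 1) + f"- {rel.name}/")
        for name in sorted(filenames):
            lines.append("  " * depth + f"- {name}")
    return lines


def build_context(root: str, conventions: Iterable[str]) -> str:
    """Assemble a context file: hand-written conventions first, then the file tree."""
    parts = ["# Project context", "", "## Conventions"]
    parts += [f"- {rule}" for rule in conventions]
    parts += ["", "## File structure"]
    parts += project_tree(root)
    return "\n".join(parts) + "\n"
```

For example, running `Path("PROJECT_CONTEXT.md").write_text(build_context(".", ["All API routes go through the shared auth middleware."]))` from the project root writes the file. Regenerating it after each feature keeps the structure section current; the conventions section still has to be maintained by hand.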

How long does it really take a non-developer to ship an app?

Self-reported timelines from recent Reddit posts range from 3 weeks (a focused weather app with paying subscribers) to approximately 1 year (a non-developer starting from zero who shipped a profitable SaaS) to 3+ years before a first public product.

Timelines depend heavily on project scope, whether the builder has adjacent technical knowledge, and the tool used.

The “10-day” launch stories that circulate on social media are real, but they typically represent narrowly scoped projects or builders with more underlying technical knowledge than their posts disclose.

Sources & References

- METR. “Early 2025 AI Experienced OS Developer Study.” metr.org, July 2025. metr.org/blog/2025-07-10

- Shen, J.H. & Tamkin, A. (Anthropic). “AI Assistance and Coding Skill Formation.” arXiv:2601.20245, January 2026. arxiv.org/abs/2601.20245

- Solveo / Second Talent. “Vibe Coding Statistics 2026.” secondtalent.com, February 2026. secondtalent.com/resources/vibe-coding-statistics/

- Friedman, J. (YC) via TechCrunch. “A Quarter of Startups in YC’s Current Cohort Have Codebases That Are Almost Entirely AI-Generated.” March 2025. techcrunch.com

- Osmani, A. “The 70% Problem: Hard Truths About AI-Assisted Coding.” Substack, December 2024. addyo.substack.com

- TechCrunch. “Eight Months In, Swedish Unicorn Lovable Crosses the $100M ARR Milestone.” July 2025. techcrunch.com

- TechCrunch. “Lovable Says It Added $100M in Revenue Last Month Alone, With Just 146 Employees.” March 2026. techcrunch.com

- Palmer, M. “Statement on CVE-2025-48757.” mattpalmer.io, May 2025. mattpalmer.io/posts/statement-on-CVE-2025-48757/

- Semafor. “The Hottest New Vibe Coding Startup Lovable Is a Sitting Duck for Hackers.” May 2025. semafor.com

- 404 Media. “Multiple Hackers Warned Anti-Porn App Quittr About Security Issue for Months.” 2026. 404media.co

- Cybernews. “App to Quit Porn Exposed Masturbation Habits of 600,000 Users.” 2026. cybernews.com

- Rahman, S.F. “The State of Vibe Coding in 2026.” Hashnode, February 2026. hashnode.com/blog/state-of-vibe-coding-2026

- Stack Overflow. “Developer Survey 2025.” 2025. survey.stackoverflow.co/2025/

- Peng, S. et al. (Microsoft / MIT). “The Impact of AI on Developer Productivity: Evidence from GitHub Copilot.” arXiv:2302.06590, 2023. arxiv.org/abs/2302.06590

- r/vibecoding. “The Real Cost of Vibe Coding Isn’t the Subscription. It’s What Happens at Month 3.” Reddit, April 2026. reddit.com/r/vibecoding/comments/1sbi35n/