Best AI Coding Tool for Non-Developers: Which One Actually Ships? (2026)

Key Takeaways

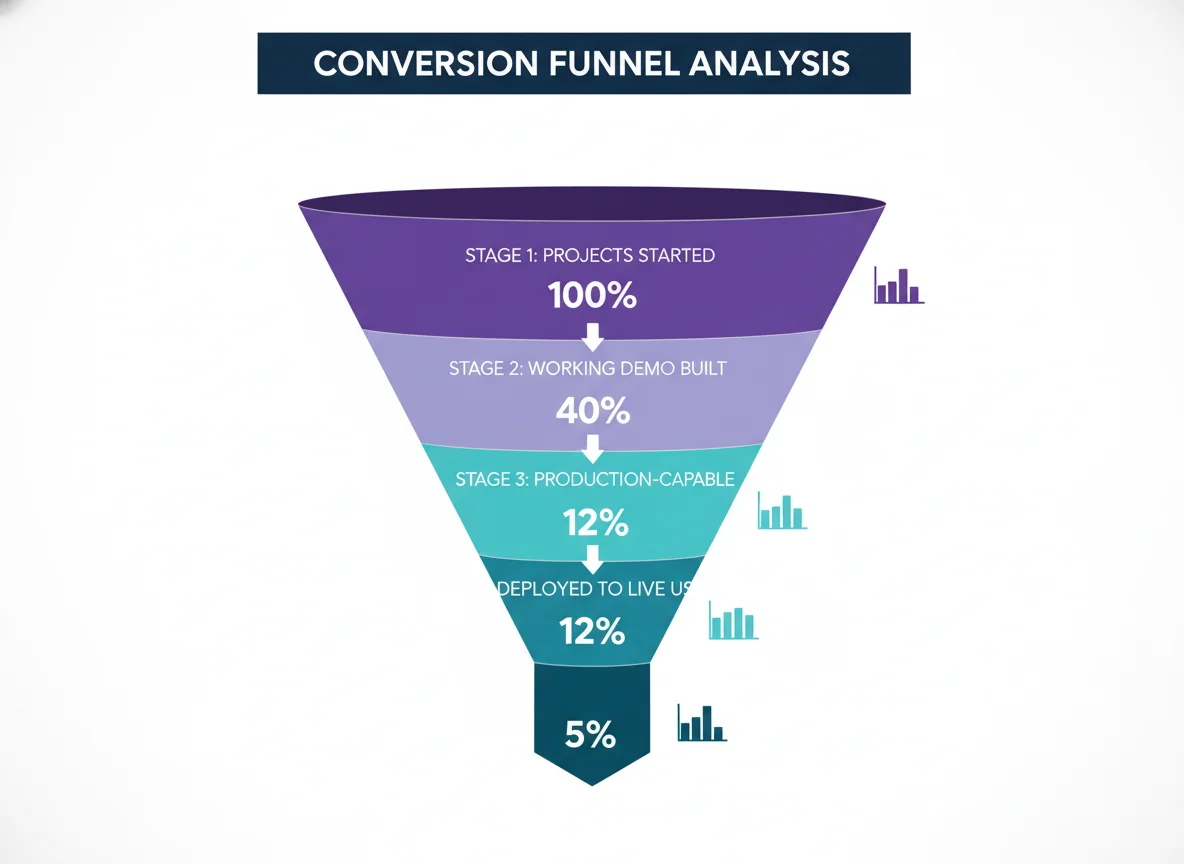

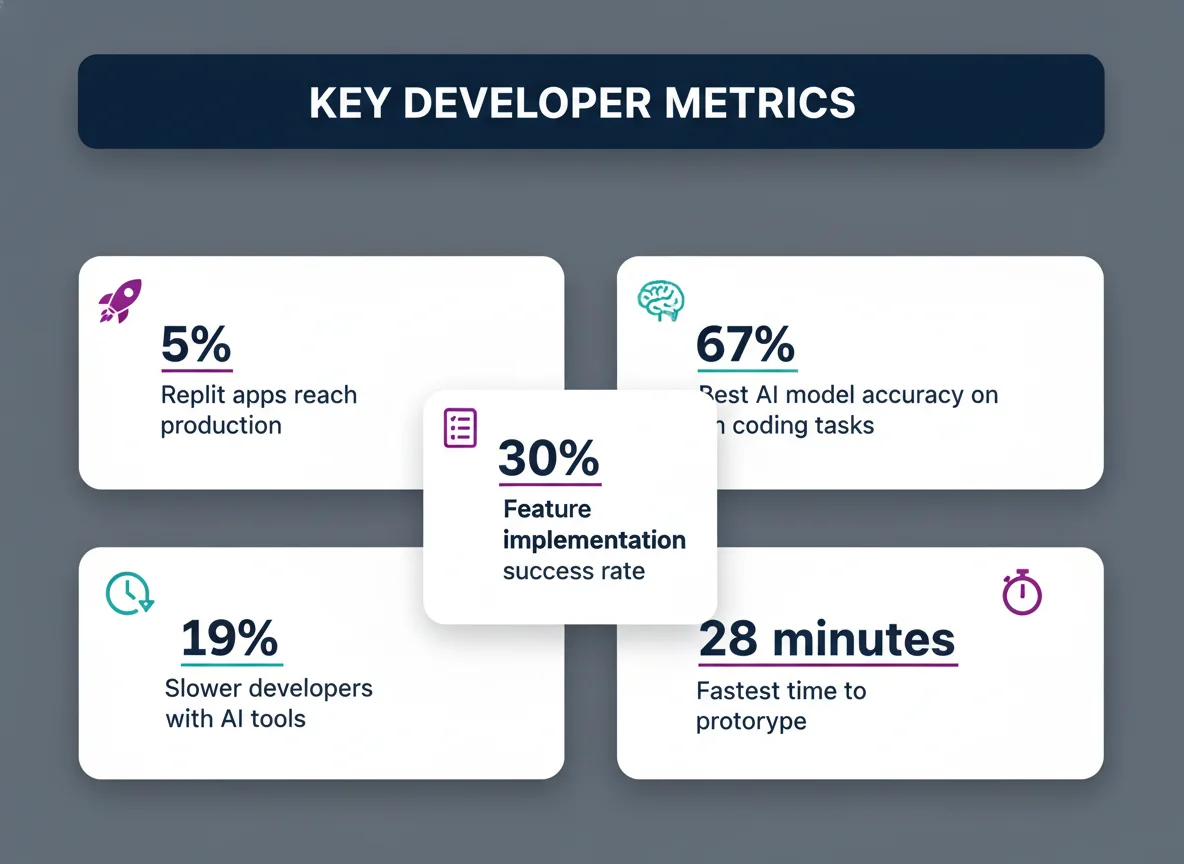

- → On Replit, 5% of AI-generated apps reached production in 2025, the clearest published proxy for shipping rate on any vibe coding platform (Replit platform data, as cited by index.dev)

- → Lovable users created 25 million projects by Q4 2025; an estimated 12% reached production-capable status (shipper.now analysis, 2026)

- → Even the top AI model fails roughly one-third of real coding workflows: VibeCodingBench v1.1 peak accuracy is 67.42%, with the authors noting “reliable end-to-end app generation is still unsolved” (vals.ai / arXiv 2603.04601, 2026)

- → FeatBench (2025) found all evaluated AI agents break existing code when adding new features, with a peak resolution rate of just 29.94% (arXiv 2509.22237)

- → Production readiness ranking: Cursor >> Replit > Lovable > Bolt. Accessibility ranking is roughly the reverse. (hellocrossman.com, March 2026)

- → Bolt.new loses context and overwrites files beyond 15–20 components, making it unsuitable for anything past a demo (r/nocode, March 2026)

- → Payment integration AI completion rate: 12.5%, versus 71.25% for tasks with no external services (FeatBench, arXiv 2509.22237)

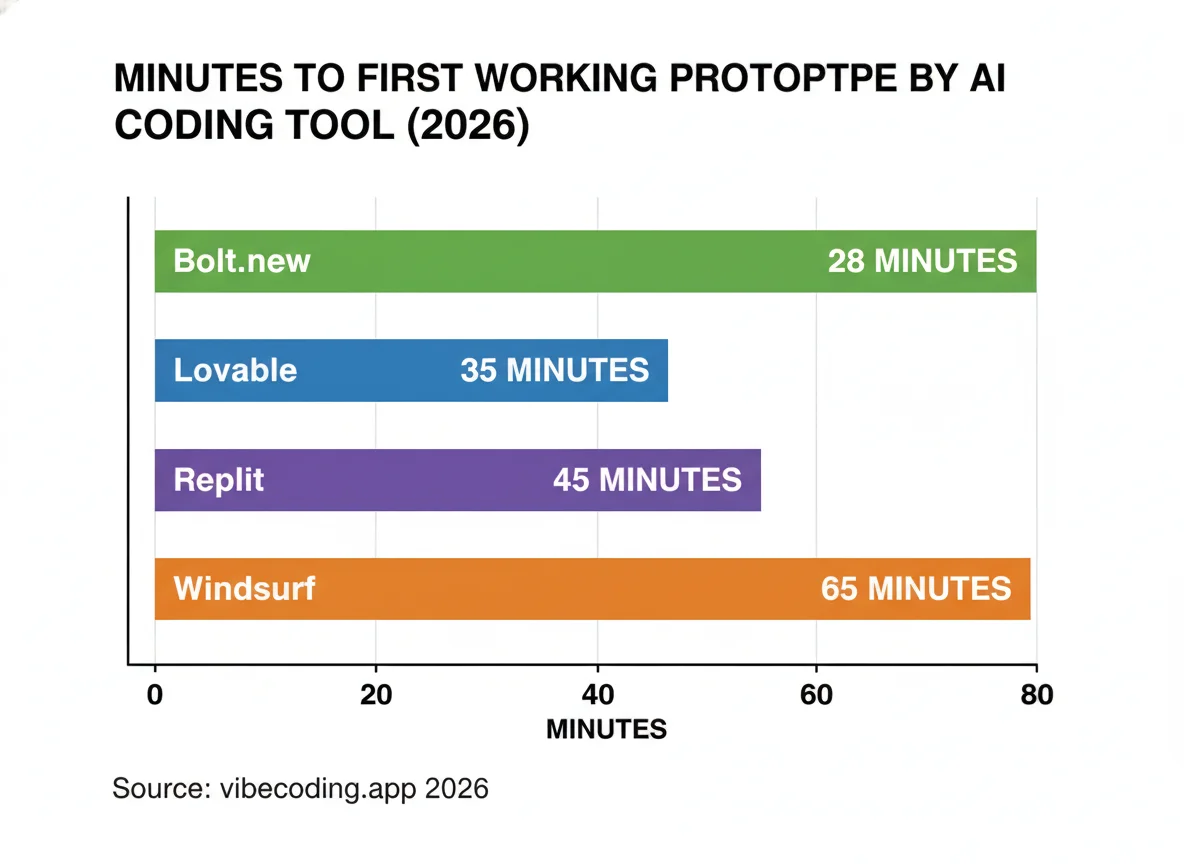

- → Time to first working prototype: Bolt 28 min, Lovable 35 min, Replit 45 min, Windsurf 65 min (vibecoding.app, 2026)

Every week, a new comparison article tells you which AI coding tool has the best interface, the most generous free tier, or the cleanest-looking output. None of them answer the only question that matters for vibe coders: which tool actually gets non-developers to a live product with real users?

This analysis draws on academic benchmarks, platform-level deployment data, and first-hand multi-tool tests to answer that question. The findings reframe the comparison entirely: the tools easiest to start with are consistently the hardest to ship with, and the gap between “I built a demo” and “I deployed a product” is far wider than vendor marketing suggests.

1 The Deployment Gap: From Project Started to Live Product

Published data from Replit shows only 5% of AI-generated apps were deployed to production in 2025. Separately, Lovable users created an estimated 25 million projects, of which roughly 12% reached production-capable status. Both figures are the clearest available evidence that starting a project and shipping one are vastly different outcomes.

The vibe coding industry reports adoption numbers enthusiastically. What it does not report is completion rates.

Replit’s Ghostwriter AI v2 created over 5 million apps in 2025, according to data cited by index.dev. Of those, 250,000 were deployed to production via one-click hosting, a 5% deployment rate.

Lovable’s scale is even larger. By Q4 2025, users were generating over 100,000 projects daily, pushing the total project count to approximately 25 million. Analysis by shipper.now estimates that around 3 million of those reached production-capable status, roughly 12%.

Both figures carry caveats: Replit’s 5% covers all users (not exclusively non-developers), and Lovable’s 12% is an analyst estimate derived from separately reported numbers.
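Both percentages can be reproduced from the raw counts quoted above. The sketch below is a minimal Python illustration, not either platform's methodology: the function name `shipping_rate` is hypothetical, and the inputs are the rounded figures reported by index.dev and shipper.now.

```python
def shipping_rate(shipped: int, started: int) -> float:
    """Return the share of started projects that shipped, as a percentage."""
    if started <= 0:
        raise ValueError("started must be positive")
    return 100 * shipped / started


# Rounded counts as quoted in this article (index.dev, shipper.now).
replit_rate = shipping_rate(shipped=250_000, started=5_000_000)
lovable_rate = shipping_rate(shipped=3_000_000, started=25_000_000)

print(f"Replit deployed to production: {replit_rate:.1f}%")
print(f"Lovable production-capable: {lovable_rate:.1f}%")
```

Because both inputs are rounded, the results are best read as order-of-magnitude proxies rather than precise shipping rates.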

What they confirm, directionally, is that the gap between “I started building” and “I shipped something” is the real story, and it is not visible in any tool’s marketing.

Academic benchmarking adds further context. VibeCodingBench v1.1 found that even the top-performing AI model (GPT 5.4) achieved only 67.42% accuracy on real-world coding workflows, prompting the benchmark authors to note: “reliable end-to-end app generation is still unsolved.” For non-developers working on harder-than-benchmark tasks, real-world failure rates are higher still. Understanding what predicts success for non-developer vibe coders matters more than any feature comparison.

2 Head-to-Head: Speed, Quality, and Cost Compared

Bolt.new reaches a working prototype fastest (28 minutes), but produces the lowest code quality (6/10) and breaks down beyond 15–20 components. Lovable is close behind on speed but degrades with iteration. Replit is slower to start but has the strongest self-correction loop. Cursor is fastest to production-grade output but requires development knowledge.

| Tool | Time to Prototype | Code Quality | Monthly Cost (Pro) | Accessibility (Non-Dev) | Production Readiness |
|---|---|---|---|---|---|
| Lovable | 35 min | 7/10 | £25/mo | ★★★★★ Highest | Medium |
| Bolt.new | 28 min | 6/10 | £20/mo | ★★★★☆ High | Low–Medium |
| Replit | 45 min | 7/10 | £25/mo base | ★★★☆☆ Medium | Medium–High |
| v0 by Vercel | — | 9/10 | Token-based | ★★☆☆☆ Low–Med | Medium (UI only) |
| Cursor | — | Highest | £20/mo flat | ★☆☆☆☆ Lowest | ★★★★★ Highest |

Sources: vibecoding.app 2026; hellocrossman.com March 2026; Medium/@aftab001x 2026. Prices in GBP are approximate equivalents; check current pricing at each platform.

The speed ranking flatters Bolt. Reaching a prototype in 28 minutes is only useful if that prototype can be iterated into something shippable. A structured look at what Bolt.new is actually built for reveals why speed at stage one does not translate to shipping at stage three.

Cost predictability matters as much as the headline price. Cursor charges a flat $20 per month regardless of usage. Bolt and Lovable use token- and credit-based systems that reward short, simple projects and penalise iteration. Replit’s community reports actual costs running 2–6x higher than advertised for complex projects.

3 Where Each Tool Breaks Down for Non-Developers

Every major AI coding tool performs well on first-version output. The divergence happens when non-developers try to change, extend, or debug what they have built. The failure modes are structural, not random, and they are confirmed by both user reports and academic research.

A vibe coder who spent $700+ and three months testing 11 AI app builders published a detailed breakdown in March 2026 on r/nocode. The findings for each tool are consistent with academic benchmark data.

| Tool | First-Version Quality | Specific Failure Point | Verdict for Shipping |
|---|---|---|---|
| Lovable | High | Fix-and-break cycle: one change breaks other pages; fixing burns a week of credits in a session | Good for demos; risky for iteration |
| Bolt.new | High (speed) | Context loss and file overwrites beyond 15–20 components; stability degrades sharply | Demo tool; not production |
| Replit | Functional | Slower initial build; higher cost variability; less polished UI output | Strongest for functional shipping |
| v0 | Excellent (UI) | Backend story remains weak; needs React knowledge; complex logic requires external tools | UI prototyping only |
| Cursor | Highest overall | Requires development knowledge and local IDE setup; non-developers hit walls they cannot debug | Best if you can code |

Sources: r/nocode $700/11-tool test (March 2026); hellocrossman.com (March 2026); gocodelab.com (April 2026).

The structural explanation comes from FeatBench (arXiv 2509.22237, September 2025). Researchers tested AI agents on 157 real-world feature implementation tasks across 27 active GitHub repositories. The central finding: “All evaluated agents tend to break existing functionalities when adding new features.” The best agent-model combination resolved only 29.94% of feature tasks.

VibeCodingBench v1.1 adds further context on what goes wrong. Analysing failures across models, the benchmark found that 46.7% of errors are “missing features” and 20.4% are authorisation issues. For non-developers building anything with user accounts, both failure types are extremely common. Understanding the complete picture of how vibe coding actually works before committing to a tool significantly reduces the likelihood of hitting these walls.

Replit’s self-correction loop offers a partial mitigation. Unlike Lovable and Bolt, Replit’s agent reads its own error outputs and attempts to fix them without the user needing to paste anything back into the chat. Replit also provides a built-in Postgres database, eliminating Supabase configuration, a common failure point for non-developers using other tools. Replit reduced its initial build time from 15–20 minutes to 3–5 minutes in December 2025, per its official blog.

4 What Non-Developers Can Realistically Build

The project type matters as much as the tool. Simple CRUD applications, landing pages, and portfolios are reliably achievable. Payment integration, real-time chat, and complex permissions consistently exceed what AI agents can handle without developer involvement.

Multiple independent comparisons converge on the same achievable-versus-challenging taxonomy for non-developers using current AI coding tools.

FeatBench measured this directly. Tasks with no external service integration had a 71.25% completion rate. Tasks requiring Stripe payment integration dropped to 12.5%. The implication: a non-developer building a paid product faces a failure rate of approximately 87.5% on the payment component alone.

| Project Type | Achievable Without Dev Knowledge | Notes |
|---|---|---|
| Landing page | ✓ Yes | All tools handle this reliably |
| Portfolio site | ✓ Yes | v0 produces best visual results |
| To-do / task app | ✓ Yes | Lovable and Replit both reliable |
| Internal dashboard | ✓ Yes | Replit strongest for data tools |
| Basic CRUD app | ✓ Yes | Depends on complexity of data model |
| Payment integration | ✗ Challenging | 12.5% AI completion rate (FeatBench) |
| Complex permissions | ✗ Challenging | Auth issues = 20.4% of AI failures |
| Real-time chat | ✗ Challenging | WebSocket complexity exceeds AI reliability |
| Multiple API integrations | ✗ Challenging | Each integration compounds failure probability |

Sources: gocodelab.com April 2026; FeatBench arXiv 2509.22237 2025; VibeCodingBench arXiv 2603.04601 2026.
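The note that each integration “compounds failure probability” can be made concrete with a rough model. The sketch below assumes every component succeeds or fails independently, which is a simplification made for illustration rather than anything FeatBench reports. `chance_all_succeed` is a hypothetical helper, the three-part app is invented, and the only measured inputs are the 71.25% and 12.5% completion rates quoted above.

```python
from math import prod


def chance_all_succeed(completion_rates: list[float]) -> float:
    """Probability that every component succeeds, assuming independence."""
    for rate in completion_rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError("rates must be between 0 and 1")
    return prod(completion_rates)


NO_EXTERNAL_SERVICE = 0.7125  # FeatBench completion rate quoted in this article
PAYMENT_INTEGRATION = 0.125   # FeatBench completion rate quoted in this article

# Failure rate on the payment component alone.
payment_failure = 1 - PAYMENT_INTEGRATION

# Hypothetical app: two plain features plus one payment flow.
app = [NO_EXTERNAL_SERVICE, NO_EXTERNAL_SERVICE, PAYMENT_INTEGRATION]
overall = chance_all_succeed(app)

print(f"Payment failure rate: {payment_failure:.1%}")
print(f"Chance all three parts are completed: {overall:.1%}")
```

Under these assumptions the weakest component dominates: adding more ordinary features lowers the overall figure gradually, while a single payment flow cuts it to a fraction.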

Cursor is the exception for complex projects, but it requires existing development knowledge to use effectively. A deeper look at what Cursor is and who it is designed for makes clear it is not a beginner’s entry point, despite being the most powerful tool in this comparison.

v0 by Vercel produces genuinely excellent UI components, but requires React knowledge for anything beyond component generation and needs external tools for backend logic. It is most useful as a design layer, not as a standalone shipping tool for non-developers.

The broader context: 63% of vibe coding users identify as non-developers, according to Second Talent’s 2026 statistics aggregator (no primary source disclosed). If even half of those users attempt to build something with payment integration, the 12.5% academic completion rate implies a very large number of abandoned projects. The data on non-developer vibe coding success rates confirms that shipping rates diverge sharply from adoption rates.

5 The Real Cost of Iteration

Advertised monthly prices ($20–$25) represent the cost of building something simple once. Iterating beyond the first version (fixing bugs, adding features, debugging failed builds) multiplies costs on every token-based or credit-based platform. Cursor’s flat pricing makes it the only tool where iteration does not escalate cost.

Monthly Pro pricing in 2026: Cursor $20 flat, Bolt $20 (10M tokens), Lovable $25, Replit $25 base plus AI credits.
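A rough way to budget for iteration is to scale the advertised price by a usage multiplier. The sketch below is illustrative only: `effective_monthly_cost` is a hypothetical helper, the 2x and 6x multipliers are the community-reported Replit range cited in section 2, and treating a flat-rate plan as a multiplier of 1 is this sketch's own simplification.

```python
def effective_monthly_cost(advertised_usd: float, multiplier: float = 1.0) -> float:
    """Scale an advertised monthly price by a usage multiplier (1.0 = flat rate)."""
    if advertised_usd < 0 or multiplier < 1.0:
        raise ValueError("price must be non-negative and multiplier at least 1")
    return advertised_usd * multiplier


cursor = effective_monthly_cost(20)          # flat subscription, no multiplier
replit_low = effective_monthly_cost(25, 2)   # low end of the reported range
replit_high = effective_monthly_cost(25, 6)  # high end of the reported range

print(f"Cursor: ${cursor:.0f}/month")
print(f"Replit, complex project: ${replit_low:.0f}-${replit_high:.0f}/month")
```

The multiplier itself is the uncertain input; the point of the sketch is that a budget built on the advertised price alone ignores it entirely.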

The gap between advertised and actual costs widens as projects grow. Lovable’s credit system penalises iteration because the fix-and-break cycle described in section 3 burns credits rapidly during debugging sessions. One builder who tested 11 tools systematically reported spending a week’s worth of credits in a single session attempting to fix a broken login flow on Lovable.

The METR randomised controlled trial (July 2025) found that experienced developers took 19% longer to complete tasks when using AI tools, despite believing afterwards that the tools had made them about 20% faster. That productivity paradox matters for non-developers attempting to budget time as well as money: the tools create a consistent impression of faster progress than they actually deliver. The mismatch between perceived and actual speed is one reason vibe coding projects run over both budget and timeline.

Cursor’s $20 flat rate is the exception. Because Cursor uses a subscription model rather than token consumption, iterating through bug cycles does not escalate cost. This structural difference partly explains why Cursor scores highest on production readiness despite being the least accessible entry point. It rewards exactly the iterative behaviour that other tools punish financially. For vibe coders thinking about the full cost of building and hosting a vibe-coded app, iteration cost is a frequently overlooked factor.

Methodology

This comparison draws on academic benchmarks, platform-level data, independent multi-tool tests, and community reports published between May 2025 and April 2026.

- Sources consulted: 27 URLs across academic papers (arXiv), reputable journalism (TechCrunch, Semafor), platform data (Replit official blog), quality blogs, and user-reported community tests

- Sources cited: 19 sources, all 2025–2026. No source older than May 2025 used.

- Data range: July 2025–April 2026

- Research date: 16 April 2026

- Limitations: No source directly measures the percentage of non-developers using each specific tool who ship a live product with users. Deployment and production-capable estimates are platform-level (covering all users, not non-developers only) and are proxy metrics, not direct shipping rate measurements. The 5% Replit figure derives from two separately reported numbers, not an explicitly stated percentage. The 12% Lovable figure is an analyst estimate.

- Update schedule: This article will be updated when new platform data or academic benchmarks are published that supersede current figures.

Frequently Asked Questions

Which AI coding tool is best for non-developers?

For ease of use, Lovable and Bolt are the most accessible: both offer chat-based interfaces with no local setup required. For projects that need to actually ship, Replit’s self-correction loop and built-in Postgres database give it an edge for functional apps. For production-grade quality, Cursor is the strongest but requires existing development knowledge to use effectively.

How many AI-generated apps actually get deployed to production?

The data points to a significant gap. On Replit, only 250,000 of 5 million AI-generated apps (5%) were deployed to production in 2025, according to data cited by index.dev. Separately, Lovable users created around 25 million total projects, with an estimated 3 million (approximately 12%) reaching production-capable status, per shipper.now analysis. Both figures cover all users, not non-developers specifically.

How long does it take to build an app with AI coding tools?

Time to a working prototype: Bolt.new is fastest at 28 minutes, followed by Lovable (35 minutes), Replit (45 minutes), and Windsurf (65 minutes), according to a 2026 benchmark by vibecoding.app. These are first-version times. Iterating beyond the initial build burns significantly more time and credits on all token- or credit-based platforms.

Why do AI-generated apps break when you add new features?

Research from FeatBench (arXiv 2509.22237, September 2025) found that all evaluated AI agents “tend to break existing functionalities when adding new features.” The best agent-model combination resolved only 29.94% of feature implementation tasks across 157 real-world tasks. This structural limitation, not a quirk of any specific tool, explains the fix-and-break cycles that non-developer vibe coders consistently report. The vibe coding glossary covers the key technical concepts behind context loss and why it causes these breakages.

What can non-developers realistically build with AI coding tools?

Reliably achievable without coding knowledge: to-do apps, landing pages, portfolio sites, CRUD applications, and basic dashboards. Consistently challenging without development experience: payment integration (AI completion rate drops to 12.5% per FeatBench), complex user permissions, real-time chat, large file processing, and multiple API integrations. Knowing your project type before choosing a tool is as important as the tool comparison itself.

How much do AI coding tools cost in practice?

Advertised Pro tier pricing (2026): Cursor $20/month flat, Bolt $20/month (10M tokens), Lovable $25/month, Replit $25/month base plus AI credits. In practice, heavy iteration multiplies costs: Replit community feedback reports actual costs running 2–6x higher than advertised for complex projects, and some Bolt users report spending over $1,000 on single debugging-heavy projects. Cursor is the only tool in this comparison where iteration does not escalate cost, because it uses flat-rate subscription pricing.

Is Cursor good for non-developers?

Cursor has the strongest production readiness of any tool in this comparison, but it is also the least accessible to non-developers. It requires existing development knowledge, a local IDE setup, and comfort with reading and editing code. Non-developers who attempt Cursor without that foundation consistently hit debugging walls they cannot resolve. A detailed breakdown of what Cursor is designed for explains why its architecture assumes a developer baseline.

Sources & References

- index.dev. “Replit Usage Statistics 2026: Growth, Users, and AI Impact.” index.dev/blog/replit-usage-statistics. Accessed 16 April 2026.

- shipper.now. “40+ Lovable Statistics of 2026.” shipper.now/lovable-stats. Accessed 16 April 2026.

- vals.ai / arXiv 2603.04601. “VibeCodingBench v1.1.” vals.ai/benchmarks/vibe-code. 2026. Accessed 16 April 2026.

- arXiv 2509.22237. “FeatBench: Evaluating Coding Agents on Feature Implementation for Vibe Coding.” arxiv.org/abs/2509.22237. September 2025. Accessed 16 April 2026.

- METR. “Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity.” July 2025. Cited in vibecoding.app and Medium/@aftab001x.

- hellocrossman.com. “Cursor vs Replit vs Bolt vs Lovable: Honest 2026 Comparison.” hellocrossman.com. March 2026. Accessed 16 April 2026.

- vibecoding.app. “Best Vibe Coding Tools 2026: Compared & Ranked.” vibecoding.app/blog/best-vibe-coding-tools. 2026. Accessed 16 April 2026.

- Medium/@aftab001x. “The 2026 AI Coding Platform Wars: Replit vs Windsurf vs Bolt.new vs Lovable.” medium.com/@aftab001x. 2026. Accessed 16 April 2026.

- Reddit r/nocode (Open-Editor-3472). “I burned $700+ and 3 months testing 11 AI app builders.” reddit.com/r/nocode/1rw8r7s. 17 March 2026. Accessed 16 April 2026.

- TechCrunch. “Lovable says it’s nearing 8 million users.” techcrunch.com. November 2025. Accessed 16 April 2026.

- TechCrunch. “Eight months in, Lovable crosses $100M ARR.” techcrunch.com. July 2025. Accessed 16 April 2026.

- shipper.now. “35+ Replit Statistics in 2026.” shipper.now/replit-stats. 2026. Accessed 16 April 2026.

- blog.replit.com. “Replit 2025 in Review.” blog.replit.com. 2025. Accessed 16 April 2026.

- Semafor. “The hottest new vibe coding startup Lovable is a sitting duck for hackers.” semafor.com. May 2025. Accessed 16 April 2026.

- gocodelab.com. “Vibe Coding Tools for Non-Developers.” gocodelab.com. April 2026. Accessed 16 April 2026.

- nocode.mba. “Bolt vs Lovable 2026.” nocode.mba/articles/bolt-vs-lovable. 2026. Accessed 16 April 2026.

- Second Talent. “Top Vibe Coding Statistics 2026.” secondtalent.com. 2026. Accessed 16 April 2026. Note: no primary sources disclosed.

- thetoolnerd.com. “Replit vs Bolt vs Lovable: Hands-On Review.” thetoolnerd.com. 2025. Accessed 16 April 2026.

- arXiv 2603.04601. “VibeCodingBench: Benchmarking AI Coding Agents on Real-World Workflows.” arxiv.org/html/2603.04601v1. 2026. Accessed 16 April 2026.

Last updated: 16 April 2026 | By CodingWithVibe Research Team